NPU traffic shape is opaque to the memory team. Arbitration weights get tuned by spreadsheet, not by workload.

NPU + Memory IP, tuned as one stack

AI traffic and DRAM, scheduled together.

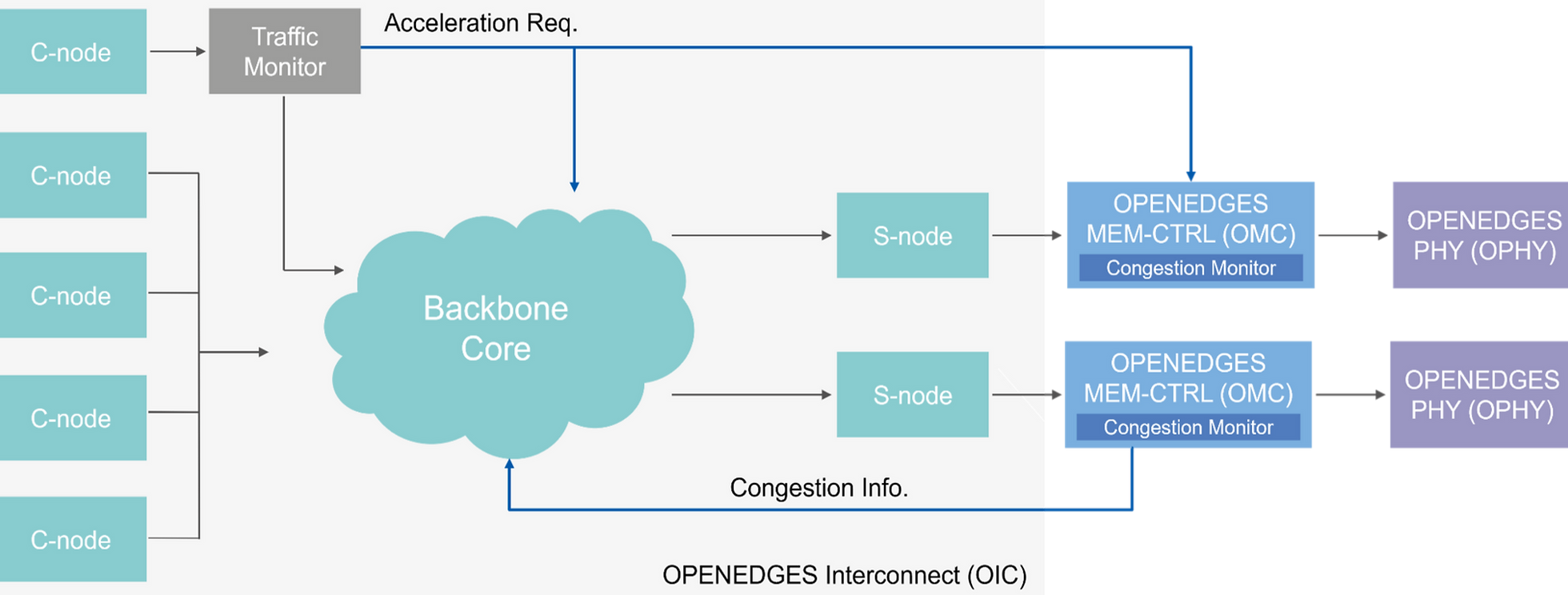

Most SoC teams license an NPU and a memory subsystem from different vendors and hope the traffic behaves. OPENEDGES tunes the ENLIGHT NPU and the ORBIT memory subsystem (OIC NoC, OMC controller, OPHY PHY) against the same workloads. ActiveQoS coordinates the two in real time, so latency-sensitive flows hold their schedule under AI bursts.

The problem

NPU vendor blames the memory vendor. Memory vendor blames the NPU.

SoC teams license an NPU from one vendor and a memory subsystem from another, then spend the back half of integration arguing about whose traffic is breaking whose deadlines.

Camera, display, and safety paths slip when AI bursts hit DRAM — and no one owns the fix end-to-end.

Without coordinated scheduling, controllers idle while traffic queues. You paid for bandwidth you don't get.

Bug in production? Two vendors, two ticket queues, no shared accountability. Tape-out slips by quarters.

The solution

ENLIGHT computes. ORBIT moves the bytes. ActiveQoS keeps them in step.

Most SoC teams stitch together an NPU and a memory subsystem from two vendors and absorb the integration risk. OPENEDGES owns both pillars and the scheduler that connects them, so AI workloads, real-time flows, and DRAM bandwidth share the same priority model from day one.

Compute · ENLIGHT NPU

Real-time scheduler · ActiveQoS

Memory subsystem · ORBIT

01

Compute

ENLIGHT — inference NPU IP for edge SoCs.

4/8-bit mixed precision, transformer support, 8 TOPS to hundreds of TOPS via single-to-quad core scaling, full software toolkit (compiler, driver, ONNX/TFLite).

- INT8 / INT16 / INT32 and FP16 / FP32

- Customizable core count and TOPS scaling

- Layer partitioning and scheduling

- Compiler · device driver · integration kits

02

Memory

ORBIT — OIC NoC + OMC controller + OPHY PHY, tuned as one.

One licensable subsystem instead of three independently bolted blocks. Dynamic priority through ActiveQoS, ECC, ASIL-B credentials, and PHY availability across advanced and mature nodes.

- OIC — HyperPath NoC, ActiveQoS, ECC, ASIL-B

- OMC — LPDDR6/5X/5/4X · DDR5/4 · GDDR6 · HBM

- OPHY — LPDDR5X PHY at 5/7/12/16nm, HBM3 at 7/6nm

- Optional AES memory encryptor

ActiveQoS · real-time scheduler

The piece other vendors can't ship together.

ActiveQoS sits between ENLIGHT and ORBIT and re-prioritizes traffic in real time when AI bursts collide with latency-sensitive flows. It only works because the same team owns the NPU traffic shape and the DRAM scheduler.

- NPU-aware: Traffic patterns from ENLIGHT feed the scheduler directly.

- DRAM-aware: OMC out-of-order scheduling keeps utilization above 90%.

- Latency-safe: Display, camera, and safety flows hold their deadlines.
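To make the idea of real-time re-prioritization concrete, here is a toy sketch of deadline-aware arbitration. It is not the ActiveQoS implementation: the `Request` fields, the `urgent_slack` threshold, and the one-grant-per-cycle model are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class Request:
    flow: str                 # illustrative flow name: "npu", "display", ...
    arrival: int              # cycle the request was issued
    deadline: Optional[int]   # absolute deadline in cycles; None = best-effort

def pick_next(pending: List[Request], now: int, urgent_slack: int = 4) -> Request:
    """Choose the next request to grant (hypothetical policy).

    Deadline-bound requests whose remaining slack is `urgent_slack`
    cycles or less go first, earliest deadline first. Otherwise the
    oldest pending request wins, so bulk traffic keeps flowing.
    """
    urgent = [r for r in pending
              if r.deadline is not None and r.deadline - now <= urgent_slack]
    if urgent:
        return min(urgent, key=lambda r: r.deadline)
    return min(pending, key=lambda r: r.arrival)

def simulate(requests: List[Request], urgent_slack: int = 4):
    """Grant one request per cycle; return (flow, grant_cycle, met_deadline) tuples."""
    todo = sorted(requests, key=lambda r: r.arrival)
    pending: List[Request] = []
    now, log = 0, []
    while todo or pending:
        # Move requests that have arrived by this cycle into the pending set.
        while todo and todo[0].arrival <= now:
            pending.append(todo.pop(0))
        if pending:
            r = pick_next(pending, now, urgent_slack)
            pending.remove(r)
            met = r.deadline is None or now <= r.deadline
            log.append((r.flow, now, met))
        now += 1
    return log

# An 8-request NPU burst at cycle 0, then one display read with a deadline of cycle 5.
reqs = [Request("npu", 0, None) for _ in range(8)] + [Request("display", 1, 5)]
boosted = simulate(reqs)                       # display is granted at cycle 1
fifo = simulate(reqs, urgent_slack=-10**9)     # boost disabled: display waits until cycle 8
```

With the boost enabled, the display read is granted ahead of the remaining NPU burst and meets its deadline. With the boost disabled, the same read waits behind the whole burst and misses it.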

01 · Compute

ENLIGHT — inference NPU IP for edge and AI SoCs.

Deep learning accelerator with strong compute density, 4/8-bit mixed precision, transformer support, single-to-quad core mapping from 8 TOPS to hundreds of TOPS, and a complete software toolkit.

- Customizable core size and performance scaling

- Layer partitioning and scheduling

- Compiler, device driver, and integration guides

- INT8/INT16/INT32 and FP16/FP32 data types

02 · Move

OIC — automated NoC generation with HyperPath routing.

Network-on-Chip IP for end-to-end interconnect generation, dynamic priority through ActiveQoS, ECC, at-speed BIST, ISO 26262 ASIL-B credentials, and meaningful area savings versus typical AXI backbones.

- HyperPath and LDA architecture

- ActiveQoS dynamic priority control

- ECC support and at-speed BIST

- Area-saving NoC for complex SoCs

03 · Schedule

OMC — out-of-order DRAM scheduling for utilization and latency.

Memory controller IP with proprietary out-of-order scheduling, over-90% DRAM utilization, low latency, configurable AXI ports and queue depths, AES memory encryption, and ActiveQoS integration.

- LPDDR6 / 5X / 5 / 4X / 4 support

- DDR5 / 4 / 3 and GDDR6 support

- HBM family scheduling

- Optional AES-based memory encryptor
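As a rough illustration of why out-of-order scheduling raises DRAM utilization, the sketch below uses the textbook row-hit-first policy (often called FR-FCFS). The OMC scheduler is proprietary and is not described here, so this is a stand-in technique. The cycle costs, the `(bank, row)` request format, and the serial single-channel model are assumptions.

```python
from typing import Dict, List, Tuple

Req = Tuple[int, int]   # (bank, row): a simplified, hypothetical request address

ROW_HIT_CYCLES = 4      # assumed cost when the target row is already open
ROW_MISS_CYCLES = 12    # assumed cost of precharge + activate + access

def service_cycles(order: List[Req]) -> int:
    """Total cycles to serve requests in the given order, one at a time."""
    open_row: Dict[int, int] = {}
    total = 0
    for bank, row in order:
        total += ROW_HIT_CYCLES if open_row.get(bank) == row else ROW_MISS_CYCLES
        open_row[bank] = row
    return total

def fr_fcfs(queue: List[Req]) -> List[Req]:
    """Reorder a queue: oldest row hit first, else oldest request."""
    pending = list(queue)
    open_row: Dict[int, int] = {}
    order: List[Req] = []
    while pending:
        hit = next((r for r in pending if open_row.get(r[0]) == r[1]), None)
        req = hit if hit is not None else pending[0]
        pending.remove(req)
        open_row[req[0]] = req[1]
        order.append(req)
    return order

# Two streams ping-ponging between rows 1 and 2 of the same bank.
queue = [(0, 1), (0, 2), (0, 1), (0, 2)]
in_order = service_cycles(queue)             # every access is a row miss
reordered = service_cycles(fr_fcfs(queue))   # accesses grouped by open row
```

Under these assumed costs the in-order queue takes 48 cycles and the reordered queue takes 32. A production scheduler would also bound how long a request can be bypassed and would exploit bank parallelism, both of which this sketch omits.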

04 · Interface

OPHY — programmable DDR PHY across advanced and mature nodes.

High-speed memory PHY IP with embedded microprocessor architecture, programmable timing at the PHY boundary, low read/write latency with OMC, and AI/HPC/mobile/automotive readiness.

- LPDDR5X / 5 / 4X / 4 PHY at 5nm, 7/6nm, 12nm, 16nm

- HBM3 PHY at 7/6nm

- GDDR6 PHY at 12nm

- DDR5 PHY at 5nm

ORBIT Memory Subsystem

NoC + DDR controller + DDR PHY, tuned as one stack.

ORBIT is the layer where interconnect, controller, and PHY behaviour are tuned together for real SoC traffic — not three separately licensed blocks bolted on top of each other.

The proof

Integration risk you don't have to absorb.

When the NPU and the memory subsystem come from the same team, the things SoC architects normally argue about — bandwidth headroom, latency under bursts, QoS arbitration, foundry support — land on one roadmap and one support contract.

Bandwidth utilization

> 90%

OMC out-of-order scheduling holds DRAM utilization high while NPU traffic competes with latency-sensitive flows.

Functional safety

ASIL-B

ISO 26262 ASIL-B certification expanded to NoC IP in 2025, so automotive teams license the same blocks consumer SoCs already use.

Process coverage

5 / 7 / 12 / 16 nm

OPHY DDR PHY available across advanced and mature nodes, plus HBM3 at 7/6nm and GDDR6 at 12nm.

Single roadmap

1 vendor

NPU, NoC, controller, and PHY share one release schedule, instead of four vendors blaming each other when traffic misbehaves.

Trusted by

40+ SoC customers · 80+ ASIC / ASSP designs in production

The next step

Ready to evaluate ENLIGHT and ORBIT against your traffic?

Send your workload profile and target node. An OPENEDGES applications engineer responds within one business day with a datasheet pack and a fit assessment — no sales gate, no NDA required to start.

Or download the ENLIGHT and ORBIT datasheets · response within 1 business day

Seoul · Markham · Yokohama · San Jose

About OPENEDGES

Memory and AI IP vendor since 2017, listed on KOSDAQ · 200+ employees across Korea, Canada, Japan, and the US · ISO 9001:2015 · ISO 26262 ASIL-B · Samsung SAFE IP partner · JEDEC member.